Best Feature

Why Choose Wakmate AI

Free Pilot

98%+ accuracy guarantee

20X faster data labelling and annotation

Discounts for longer projects

24/7 chat support

Wakmate AI

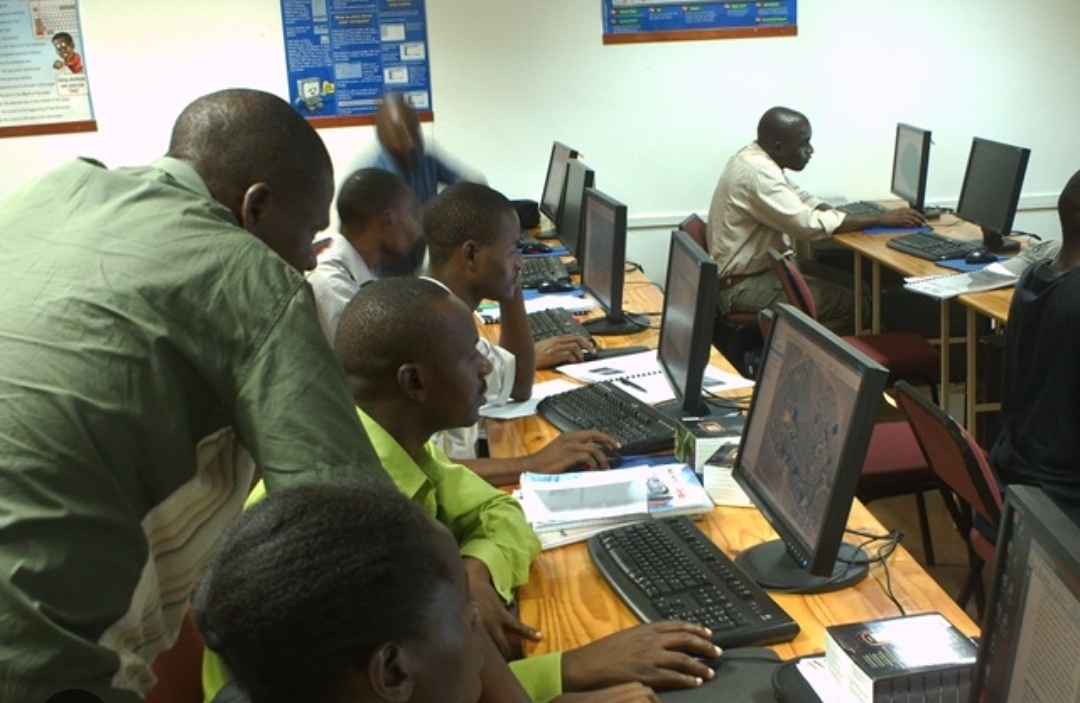

Data Annotators for All Industries

Wakmate AI specializes in AI data labelling and annotation, helping your models and automations run as expected. We bring humans into the loop to catch and eliminate errors in your data. We vet, recruit, rigorously train, and test our Data Annotators to prepare them for AI data annotation projects of every kind. Our large team of annotators spans many industries, so we can meet the requirements of any project.

- Highly-trained Data Annotators

- 98%+ quality and satisfaction rate

- 100% confidentiality guarantee

About Wakmate AI

Book Free Pilot

150+ Annotators

8+ Services

5,000,000+ Items Annotated

Our FAQ

Explore Our Informative FAQ Section

We have zero tolerance for project information leaks. Confidentiality is a point of emphasis throughout the training of our specialists and generalists, and we assure you that your project details will not be leaked to any third parties.

We sign NDAs with our specialists and generalists, and we regularly remind them of this policy.

At Wakmate AI, we combat Annotator Drift using "Gold-Standard Insertion": we periodically inject control data (pre-verified samples) into our annotators' queues without their knowledge. If an annotator's accuracy on these control samples falls below our quality threshold, they are automatically flagged for recalibration. This keeps the "Helpfulness" and "Safety" standards we set on Day 1 identical to those applied on Day 100.
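The page does not describe how Gold-Standard Insertion is implemented. As a rough illustration of the general technique only, the Python sketch below mixes pre-verified control items into a task queue, scores an annotator solely on those control items, and flags them when accuracy falls below a threshold. The names `Item`, `build_queue`, `gold_accuracy`, and `needs_recalibration`, the `gold_rate` parameter, and the default threshold of 0.95 are hypothetical assumptions, not Wakmate AI's actual code or values.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class Item:
    item_id: str
    payload: str
    # Hypothetical: set only on pre-verified control (gold) samples.
    gold_label: Optional[str] = None


def build_queue(tasks, gold_pool, gold_rate, rng):
    """Mix roughly gold_rate * len(tasks) control items into the queue, then shuffle
    so control items are interleaved with regular tasks."""
    queue = list(tasks)
    n_gold = max(1, round(len(tasks) * gold_rate))  # at least one control per batch
    queue.extend(rng.sample(gold_pool, min(n_gold, len(gold_pool))))
    rng.shuffle(queue)
    return queue


def gold_accuracy(items, answers):
    """Accuracy measured only on control items; regular tasks are ignored.
    Returns None when the batch contains no control items."""
    gold = [it for it in items if it.gold_label is not None]
    if not gold:
        return None
    correct = sum(answers[it.item_id] == it.gold_label for it in gold)
    return correct / len(gold)


def needs_recalibration(items, answers, threshold=0.95):
    """Flag the annotator if their control-item accuracy is below the (hypothetical) threshold."""
    acc = gold_accuracy(items, answers)
    return acc is not None and acc < threshold


rng = random.Random(0)
tasks = [Item(f"t{i}", f"text {i}") for i in range(20)]
gold_pool = [Item(f"g{i}", f"control {i}", gold_label="positive") for i in range(5)]
queue = build_queue(tasks, gold_pool, gold_rate=0.1, rng=rng)
answers = {it.item_id: "positive" for it in queue}
print(len(queue), gold_accuracy(queue, answers), needs_recalibration(queue, answers))
```

In this sketch the answer key travels on the `Item` object for brevity; a real system would presumably keep it server-side so annotators cannot tell control items apart from regular work.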

Absolutely. We are not just a "passive" labelling force. Through our Active Discovery Workflow, our senior annotators are trained to tag "Edge-Case Ambiguity." When our team encounters a data point where the current guidelines are insufficient or the model's output is unusually inconsistent, we flag it as a "Boundary Case." We then send you a weekly Strategic Edge-Case Report that helps your research team identify where the model's reasoning is brittle, which often informs the next iteration of your architecture or training set.
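The page does not specify how Boundary Cases are recorded or rolled up into the weekly report. A minimal Python sketch, assuming each flag records the item, a reason (the two triggers named in the answer: a gap in the guidelines or an inconsistent model output), and a hypothetical `guideline_section` field, could aggregate one week of flags as follows. `BoundaryFlag`, `weekly_edge_case_report`, and the reason strings are illustrative names, not Wakmate AI's actual schema.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass
class BoundaryFlag:
    item_id: str
    reason: str             # hypothetical values: "guideline_gap" or "inconsistent_output"
    guideline_section: str  # hypothetical: guideline section the case falls under
    note: str               # annotator's free-text explanation
    flagged_on: date


def weekly_edge_case_report(flags, week_start, week_end):
    """Summarise Boundary Case flags raised between week_start and week_end (inclusive)."""
    in_week = [f for f in flags if week_start <= f.flagged_on <= week_end]
    by_reason = Counter(f.reason for f in in_week)
    by_section = defaultdict(list)
    for f in in_week:
        by_section[f.guideline_section].append(f.item_id)
    # Sections with the most flags come first: candidate spots where the
    # guidelines, or the model's reasoning, may be brittle.
    hotspots = sorted(by_section.items(), key=lambda kv: len(kv[1]), reverse=True)
    return {"total": len(in_week), "by_reason": dict(by_reason), "hotspots": hotspots}


flags = [
    BoundaryFlag("a1", "guideline_gap", "safety/refusals", "unclear case", date(2024, 5, 6)),
    BoundaryFlag("a2", "inconsistent_output", "safety/refusals", "flip-flops", date(2024, 5, 7)),
    BoundaryFlag("a3", "guideline_gap", "helpfulness/tone", "no rule", date(2024, 5, 8)),
    BoundaryFlag("a4", "guideline_gap", "helpfulness/tone", "old flag", date(2024, 4, 1)),
]
report = weekly_edge_case_report(flags, date(2024, 5, 6), date(2024, 5, 12))
print(report)
```

Grouping by guideline section is one design choice among several; grouping by model version or task type would surface different patterns.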

We are always ready to respond to any issues you have with your project's quality and to resolve them as quickly as possible. Just reach us through whichever channel is most convenient for you, and we will sort out the issue right away.

You can reach us through any of our contact channels or via chat, and we will discuss your project.